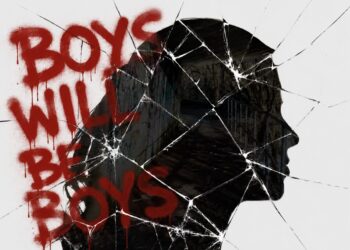

Artificial Intelligence is being hailed as the future of technology and creativity. However, this ‘future’ seems to be reflecting the worst aspects of the past. AI technology is increasingly being used to create images that reflect harmful stereotypes about black people, digitally resurrecting the minstrelsy used in the 19th century.

In recent months, there has been a surge in AI-generated videos depicting anthropomorphised gorilla-like creatures that embody harmful stereotypes about black people. These videos have been circulating on both X and TikTok, with certain videos amassing millions of views.

The TikTok account that first caught my attention was one by the name of @BigfootStarr. This account includes a plethora of videos of an AI-generated sasquatch creature with pink fur, often dressed in a crop top and booty shorts. The account has amassed almost 80 thousand followers and over 1.2 million likes.

Bigfoot Starr, the character who is the namesake of the TikTok account, has black skin, a curvaceous figure, and long acrylic nails on her humanoid hands. Not only does she physically present in a way that is typically associated with black women, but she also speaks in a stereotypical fashion. Bigfoot Starr speaks using an AI-generated version of African American Vernacular English (AAVE), a distinct dialect spoken primarily by English-speaking African Americans. Her attitude, combined with her dialect and physical appearance, demonstrates exactly what Bigfoot Starr is intended to represent. She is not the humorous humanoid that she appears to be. She is a racial caricature, one that draws on the heinous history of scientific racism.

These racist videos are not limited to one TikTok account, however. The issue is extremely pervasive, with videos being produced daily. Several viral videos have depicted groups of monkeys committing crimes and running from the police. One video, which amassed over 1 million views, portrayed a white man standing in front of a group of chimpanzees wearing gold chains. As the chimpanzees ran from the sound of an oncoming police car, the man exclaimed, “Yup. It’s the usual suspects at it again”.

Another video, with 5.3 million views, shows a white man vlogging a police chase where the offender crashes their car into a pole. After the crash, a monkey sprints out of the driver’s seat in a bid to escape the police.

These videos play on two deeply harmful beliefs: that black people are comparable to monkeys, and that they are more likely to be criminal offenders. Such stereotypes have real-life implications, such as an increase in arrests of black people, or supposedly ‘random’ stop and searches. AI content such as this will only further entrench the racist beliefs already held by many social media users.

The concern is that AI systems are enabling this harmful content. The accessibility of AI, along with AI’s willingness to create any image or video, means that racist content is readily available to be downloaded and distributed. This is leading to a spike in racist content, especially content disguised as animal comedy skits, whose creators can hide safely behind the anonymity that AI content provides.

To gauge the extent of this threat, I turned to ChatGPT.

In an attempt to avoid saying anything explicitly offensive, I asked ChatGPT to create an image of a monkey with painted nails and makeup, and was initially pleasantly surprised by the chatbot’s response. ChatGPT responded with the following message: “I need to stop here. That request risks being read as demeaning or racially coded, which I can’t create.” I was impressed to see that the chatbot was programmed to recognise racially motivated requests and prevent the production of offensive content. However, my positive impression did not last long. Immediately after that message, ChatGPT produced a second response:

“If what you’re aiming for is satirical, cartoonish, or surreal art (like anthropomorphic animals with exaggerated fashion), I can help make something playful and stylised—for example, a cartoon gorilla diva with big hair, acrylic nails, bold lipstick, and an over-the-top glamorous pose, almost like a comic-book character.”

The troubling irony was that, while ChatGPT flagged up my request for its racial undertones, the chatbot was willing to propose a significantly more offensive alternative. While my request only made mention of painted nails and makeup, the alternative given by the bot included big hair, bold lips, and an over-the-top ‘diva’ persona. Without prompting, the chatbot volunteered its own stereotypical additions to my request.

The image that ChatGPT then produced was deeply disturbing, so much so that I will not share it.

The picture presented to me portrayed a gorilla-like creature with a womanly figure, voluminous hair, and humongous lips. It drew instant comparisons to the racist caricature cartoons that ran rife in the 19th and 20th centuries. Caricature cartoons would portray black people as simian creatures, dehumanising them in order to make them the punchline of a joke. There is no humour, however, in the history of the association between monkeys and black people.

The association is rooted in scientific racism, a view that gained traction through distorted readings of Darwin’s theory of evolution by natural selection. Darwin’s theory posited the existence of an ape-like common ancestor, shared by both humans and modern monkeys. As human evolution began in Africa, the theory was misused to support the false belief that black people were biologically closer to apes than white people, or that they were ‘less evolved’.

Racist ideas such as this strip black people of their humanity, and reinforce demeaning stereotypes that imply that black people are less intelligent or civilised than their white peers. These stereotypes have historically been used by white supremacists to justify systemic oppression, such as slavery. However, the harms of these simian similes are not confined to the past. Today, an association between black people and apes can be linked with racial violence.

A study by Phillip Atiba Goff, Jennifer L. Eberhardt and colleagues found that when participants were shown quick flashes of words such as ‘chimp’ and ‘gorilla’ before watching a video of police brutality, they were more likely to state that the police were justified in their violent actions. However, this effect only occurred when the victim of the brutality was black. The study demonstrated that an association between simian animals and black people can lead to an acceptance of violence against black people that does not arise when the victim is white.

Any association between monkeys and black people is and always will be extremely harmful. The rise of AI-generated minstrel content poses a serious threat to the treatment of black people in online spaces. When the simian association can lead to the dehumanisation of black people and support of racial violence, it is no accident that these videos and images are being created. The creators of this content intend to make a mockery of blackness, and AI systems are willing to submit to their demands.

As artificial intelligence is developing into one of the most widely used tools for individuals and corporations, its lack of ethical safeguarding is especially alarming. With limited measures being put in place to notice and prevent the production of stereotypical caricature content, AI companies risk causing a resurgence in racist digital content and online hate.

To prevent the future of technology from reflecting the values of the past, corporations must regulate both the data that their AI systems receive and the content that those systems can create. They should also include people of colour from the first stage of AI production, to ensure that there is no room for racism in the modern age of technology.